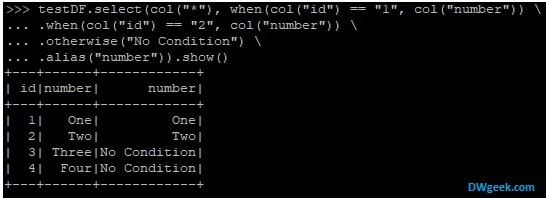

`scala> val strings = ( "spark-3.2.1-bin-hadoop3.2/README.md")`

Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for stream processing.

As the part of the Spark application responsible for instantiating a SparkSession, the Spark driver has multiple roles: it communicates with the cluster manager; it requests resources (CPU, memory, etc.) from the cluster manager for Spark’s executors (JVMs); and it transforms all the Spark operations into DAG computations, schedules them, and distributes their execution as tasks across the Spark executors. Once the resources are allocated, it communicates directly with the executors.

In Spark 2.0, the SparkSession became a unified conduit to all Spark operations and data. Not only did it subsume previous entry points to Spark like the SparkContext, SQLContext, HiveContext, SparkConf, and StreamingContext, but it also made working with Spark simpler and easier.

The cluster manager is responsible for managing and allocating resources for the cluster of nodes on which your Spark application runs. Currently, Spark supports four cluster managers: the built-in standalone cluster manager, Apache Hadoop YARN, Apache Mesos, and Kubernetes.

A Spark executor runs on each worker node in the cluster. The executors communicate with the driver program and are responsible for executing tasks on the workers. In most deployment modes, only a single executor runs per node.

Spark’s DataFrameReaders and DataFrameWriters can also be extended to read data from other sources, such as Apache Kafka, Kinesis, Azure Storage, and Amazon S3, into its logical data abstraction, on which it can operate.
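The shell line above does not show how the README is actually loaded. As a minimal sketch, assuming the file is read with `spark.read.text` (an assumption; the source does not show the call), the following creates a SparkSession and reads a text file into a DataFrame. The object name `ReadmeExample`, the helper `countSparkLines`, the application name, and the `local[*]` master are hypothetical choices made for illustration.

```scala
import org.apache.spark.sql.SparkSession

object ReadmeExample {
  // Reads a text file into a DataFrame (one row per line, in a single
  // string column named "value") and counts the lines mentioning "Spark".
  def countSparkLines(spark: SparkSession, path: String): Long = {
    val strings = spark.read.text(path) // assumed call, not shown in the source
    strings.show(10, false)             // print the first 10 lines without truncation
    strings.filter(strings("value").contains("Spark")).count()
  }

  def main(args: Array[String]): Unit = {
    // The SparkSession is the single entry point; the driver creates it.
    val spark = SparkSession.builder()
      .appName("ReadmeExample") // hypothetical application name
      .master("local[*]")       // run locally; a cluster manager URL would go here
      .getOrCreate()

    // Path taken from the shell line above; pass another path as the first argument.
    val path = args.headOption.getOrElse("spark-3.2.1-bin-hadoop3.2/README.md")
    println(countSparkLines(spark, path))
    spark.stop()
  }
}
```

In the Spark shell a session named `spark` already exists, so only the body of `countSparkLines` would be needed there.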
Spark can read data stored in myriad sources (Apache Hadoop, Apache Cassandra, Apache HBase, MongoDB, Apache Hive, RDBMSs, and more) and process it all in memory. Its core components are Spark SQL, Spark Structured Streaming, Spark MLlib, and GraphX.

Spark achieves simplicity by providing a fundamental abstraction of a simple logical data structure called a Resilient Distributed Dataset (RDD), upon which all other higher-level structured data abstractions, such as DataFrames and Datasets, are constructed. Spark also builds its query computations as a directed acyclic graph (DAG); its DAG scheduler and query optimizer construct an efficient computational graph that can usually be decomposed into tasks that are executed in parallel across workers on the cluster.

In particular, data engineers will learn how to use Spark’s Structured APIs to perform complex data exploration and analysis on both batch and streaming data; use Spark SQL for interactive queries; use Spark’s built-in and external data sources to read, refine, and write data in different file formats as part of their extract, transform, and load (ETL) tasks; and build reliable data lakes with Spark and the open source Delta Lake table format.

Specify JDBC Connector for Spark classpath

Save JDBC Connector inside Spark root directory

Querying with the Spark SQL Shell, Beeline, and Tableau

Speeding up and distributing PySpark UDFs with Pandas UDFs

Evaluation order and null checking in Spark SQL

Spark SQL and DataFrames: Interacting with External Data Sources

Reading an ORC file into a Spark SQL table

Reading an Avro file into a Spark SQL table

Reading a CSV file into a Spark SQL table

Reading JSON files into a Spark SQL table

Reading Parquet files into a Spark SQL table

Data Sources for DataFrames and SQL Tables

Temporary views versus global temporary views

Spark SQL and DataFrames: Introduction to Built-in Data Sources

Typed Objects, Untyped Objects, and Generic Rows

Using DataFrameReader and DataFrameWriter

Spark’s Structured and Complex Data Types

Transformations, Actions, and Lazy Evaluation
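The DAG-based execution described earlier is easiest to see through lazy evaluation: transformations only record steps in a plan, and nothing runs until an action is called. The following is a minimal sketch under stated assumptions; the object name `LazyEvalSketch`, the helper `evenSquares`, and the column name `sq` are hypothetical and not taken from the source.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.col

object LazyEvalSketch {
  // Returns the even squares of 1..n, sorted ascending.
  def evenSquares(spark: SparkSession, n: Long): Array[Long] = {
    // Transformations: each call only extends the logical plan; no job runs yet.
    val numbers = spark.range(1, n + 1) // single column named "id"
    val squared = numbers.select((col("id") * col("id")).as("sq"))
    val evens   = squared.filter(col("sq") % 2 === 0)

    // Prints the physical plan Spark derived from the recorded transformations.
    evens.explain()

    // Action: collect() triggers execution and returns the rows to the driver.
    evens.collect().map(_.getLong(0)).sorted
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("LazyEvalSketch") // hypothetical application name
      .master("local[*]")
      .getOrCreate()
    println(evenSquares(spark, 6).mkString(", "))
    spark.stop()
  }
}
```

Because the plan is only built up until `collect()` is called, Spark can optimize the whole chain of transformations at once before splitting it into tasks.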
